ML Engineer Interview Services

Rigorous evaluation for candidates who build, train, and ship machine learning systems.

What we evaluate

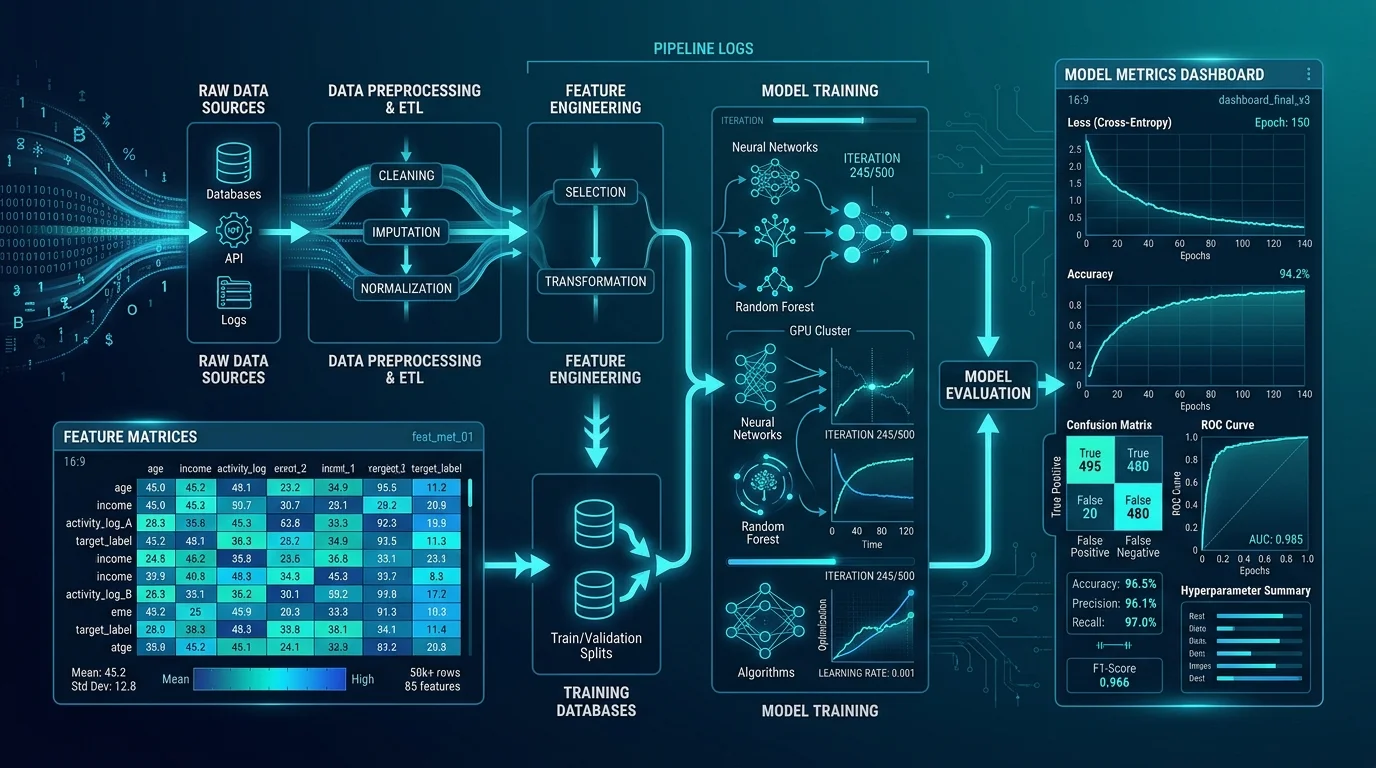

ML engineers build the infrastructure that takes machine learning from research to production — feature pipelines, training workflows, experiment tracking, model serving, and the deployment systems that keep models performing in the real world. Our ML engineer interviews assess practical ML engineering competency across the full model lifecycle, not just theoretical knowledge of algorithms.

Model training & optimization

- Training pipelines

- Hyperparameter tuning

- Regularization techniques

- Gradient descent variants

- Distributed training

Feature engineering & pipelines

- Feature stores

- Data preprocessing at scale

- Online vs offline features

- Feature drift detection

- Pipeline orchestration

Experiment tracking & evaluation

- Experiment management (MLflow, Weights & Biases)

- Metric selection

- Offline vs online evaluation

- A/B testing for ML

Model serving & infrastructure

- Model deployment patterns

- Latency and throughput trade-offs

- Batch vs real-time inference

- Model versioning

ML system design

- End-to-end ML system architecture

- Data and model feedback loops

- Monitoring and alerting

- Retraining strategies

How this role differs from adjacent roles

vs. AI Engineer

ML engineers build and maintain custom-trained models. They work hands-on with training infrastructure, feature pipelines, and model lifecycle management. AI engineers primarily integrate pre-trained foundation models (LLMs, etc.) into products. The ML engineer's output is a trained model artifact; the AI engineer's output is an application or feature built on top of existing models.

vs. Data Scientist

ML engineers are responsible for productionizing models and keeping them running reliably at scale. Data scientists are responsible for the research, experimentation, and statistical rigor that identifies which models and approaches are worth building. The ML engineer takes the data scientist's work and makes it production-grade.

Interview format

ML system design

The candidate designs an end-to-end ML system, from data ingestion through model serving. We assess architectural thinking, scalability awareness, and operational maturity.

Technical depth

Questions on training pipelines, feature engineering, model evaluation, and serving infrastructure, calibrated to the candidate's seniority level.

Practical judgment

Scenario-based evaluation of how the candidate debugs model regressions, handles data quality issues, and manages production incidents.

What you receive

- Structured scorecard with role-specific competency ratings

- Specific evidence from the interview for each evaluated area

- Clear hire / no-hire recommendation with supporting rationale

- Narrative summary of technical performance

- Optional written debrief for stakeholder sharing

Ready to hire with more confidence?

Get a structured technical evaluation delivered by a practitioner who knows the domain — not a generic screener.